The Alteryx-to-Apache Hop Data Integration Framework represents a strategic shift from high-cost OpEx models to a high-performance, sovereign infrastructure. By leveraging open-source orchestration within a hardened local or private cloud environment, enterprises can reclaim data sovereignty while significantly improving resource optimization. This blueprint provides the technical roadmap for transitioning mission-critical ETL workflows to a scalable, metadata-driven architecture.

Apache Hop Migration Framework Technical Blueprint

Key benchmarks for 2026 infrastructure deployment and technical audit.

- ✓ Framework Type: Cloud-Agnostic / Sovereign Infrastructure

- ✓ Deployment Time: 80 Engineering Hours

- ✓ Operational Efficiency: 85% Estimated Resource Optimization

Deployment Specifications

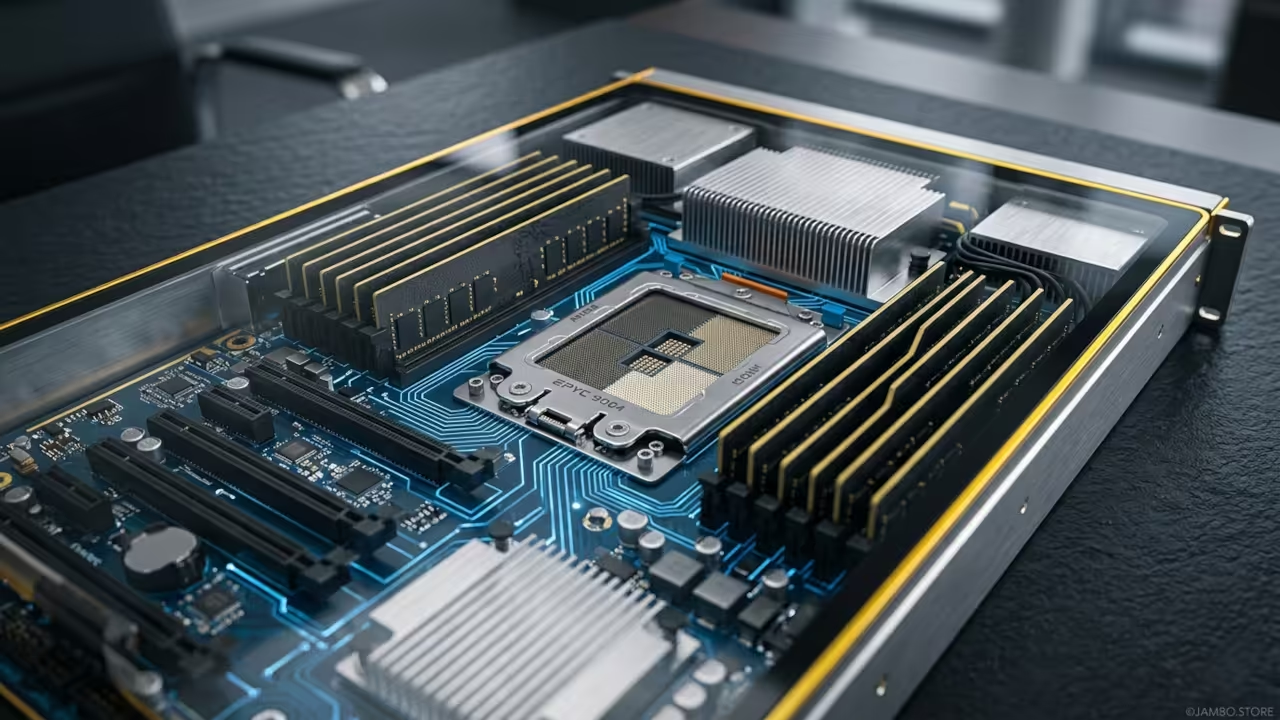

- Hardware Requirement: AMD EPYC 9004 Series (16-Core minimum) with 128GB DDR5 ECC RAM.
- Software Stack: Apache Hop v3.2, Docker Engine v27.1, Ubuntu 24.04 LTS, PostgreSQL 17.
- Difficulty Level: Advanced / Enterprise Systems Integration.

Architecture & Hardening

Modern data orchestration in 2026 demands a departure from restrictive, seat-based licensing models. The Apache Hop v3.2 environment thrives on a decoupled architecture where the Hop GUI (desktop) or Hop Web (containerized) interacts with a robust Hop Server (remote engine). For this deployment, we specify the use of NVMe Gen5 storage arrays to handle high-throughput I/O operations during complex data transformations and lookups.

The networking layer must be configured with a minimum of 10GbE SFP+ interfaces to prevent bottlenecks between the storage layer and the processing units. We utilize Docker Swarm or Kubernetes for container orchestration, ensuring that Hop Server instances can scale horizontally across multiple physical nodes. Security is enforced via Traefik 3.0 as a reverse proxy, providing automated TLS 1.3 encryption and OAuth2 authentication for all management interfaces.

Infrastructure Comparison: SaaS vs. Sovereign Deployment

| Feature | Proprietary SaaS Model | Apache Hop on EPYC (Sovereign) |
|---|---|---|
| Licensing Model | Per-User Subscription | Apache License 2.0 (Open Source) |
| Processing Power | Shared Multi-tenant Resources | Dedicated AMD EPYC 9004 (96-128 PCIe Lanes) |
| Data Sovereignty | Third-Party Managed | Client Managed / Zero Trust Architecture |
| Technical Control | Black-box Logic | Metadata-Driven / Auditable |
| Scaling Ceiling | Tier-dependent | Linearly Scalable via Hardware Expansion |

Technical Layout

The data flow architecture begins at the Ingestion Layer, where Apache Hop connectors interface with various RDBMS, NoSQL, and API endpoints. Metadata is stored in a centralized Git repository, allowing for seamless CI/CD integration and version control. During the Transformation Phase, the Hop engine executes pipelines and workflows in memory on the JVM, whose JIT compiler can use the AVX-512 instruction set of the AMD EPYC processor to accelerate vectorizable numeric work.

Security hardening combines kernel-level AppArmor profiles with strictly defined Docker bridge networks that isolate the ETL engine from the public internet.

Architect’s Note: For 2026 compliance, all data at rest must be encrypted using AES-256-GCM, and the system should maintain a dedicated immutable backup volume on a separate VLAN. This ensures that even in the event of a primary system compromise, historical data remains verifiable for auditing purposes.

Step-by-Step Implementation

Phase 1: Hardware Acquisition and Baseline Testing

Procure a server chassis equipped with an AMD EPYC 9124 or higher. Verify ECC memory stability and run a CPU stress test to confirm there is enough thermal headroom for high-concurrency ETL loads.

    # Check CPU AVX-512 support for data acceleration
    lscpu | grep avx512

    # Verify ECC memory error reporting
    edac-util -v
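
The flag check above can also be turned into a pass/fail gate for a provisioning script. The following is a minimal sketch, assuming the input is the `flags` line from `/proc/cpuinfo`; the helper name `has_cpu_flag` is hypothetical.

```shell
#!/bin/sh
# has_cpu_flag FLAG: succeeds if FLAG appears as a whole word on stdin.
# Intended input is the "flags" line from /proc/cpuinfo (hypothetical helper).
has_cpu_flag() {
    # Put every whitespace-separated token on its own line, then match exactly,
    # so "avx512f" does not match "avx512dq".
    tr ' \t' '\n\n' | grep -qx -- "$1"
}

# Example: abort provisioning when the AVX-512 foundation flag is missing.
# grep -m1 '^flags' /proc/cpuinfo | has_cpu_flag avx512f || echo "no AVX-512" >&2
```

Matching whole tokens rather than substrings avoids a false positive when only an extension flag is present.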

Phase 2: Operating System Hardening

Disable unnecessary services and configure UFW (Uncomplicated Firewall) to deny all incoming traffic by default, then allow SSH only from the management subnet. Generate SSH keys using the Ed25519 algorithm.

    sudo ufw default deny incoming
    sudo ufw default allow outgoing
    sudo ufw allow from 192.168.1.0/24 to any port 22 proto tcp
    sudo ufw enable
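
To confirm the policy stays in place after later changes, the output of `ufw status verbose` can be checked from a script. This is a rough sketch that assumes the usual `Status:` and `Default:` lines in that output; the function name `ufw_policy_ok` is illustrative.

```shell
#!/bin/sh
# ufw_policy_ok: reads `ufw status verbose` output on stdin and succeeds only
# if the firewall is active and the default incoming policy is "deny".
ufw_policy_ok() {
    awk '
        /^Status: active/             { active = 1 }
        /^Default: deny \(incoming\)/ { deny = 1 }
        END { exit !(active && deny) }
    '
}

# Example:
# sudo ufw status verbose | ufw_policy_ok || echo "firewall policy drifted" >&2
```

Reading from stdin keeps the check free of privileges, so it can run in a monitoring job that receives the status text from elsewhere.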

Phase 3: Containerization Environment Setup

Install Docker Engine and configure the daemon for production-grade logging and overlay2 storage drivers.

    cat <<EOF | sudo tee /etc/docker/daemon.json
    {
      "log-driver": "json-file",
      "log-opts": {
        "max-size": "100m",
        "max-file": "3"
      },
      "storage-driver": "overlay2"
    }
    EOF
    sudo systemctl restart docker
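
A malformed `daemon.json` prevents the Docker daemon from starting, so it can be worth validating the file before the restart. The sketch below assumes either `jq` or `python3` is installed on the host; the helper name `json_ok` is hypothetical.

```shell
#!/bin/sh
# json_ok FILE: succeeds if FILE parses as JSON.
# Uses jq when installed, otherwise python3; returns 2 if neither is available.
json_ok() {
    if command -v jq >/dev/null 2>&1; then
        jq empty "$1" >/dev/null 2>&1
    elif command -v python3 >/dev/null 2>&1; then
        python3 -m json.tool "$1" >/dev/null 2>&1
    else
        return 2
    fi
}

# Example: only restart the daemon when the file parses.
# json_ok /etc/docker/daemon.json && sudo systemctl restart docker
```

This only checks syntax. It does not confirm that the keys are options the daemon recognizes.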

Phase 4: Database Infrastructure Deployment

Launch a PostgreSQL 17 container to serve as the metadata audit store. Optimize for high-concurrency writes.

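    # Publish on loopback only: ports published by Docker are not filtered by UFW.
    # Replace secure_token with a real secret before use.
    docker run --name hop-metadata-db \
      -e POSTGRES_PASSWORD=secure_token \
      -v hop_db_data:/var/lib/postgresql/data \
      -p 127.0.0.1:5432:5432 \
      -d postgres:17-alpine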
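
The `secure_token` value is a placeholder. One way to avoid putting a real password on the command line is to generate it into an owner-only env file and pass that file with `docker run --env-file`. The following is a sketch under that assumption; the function names and the file path in the example are illustrative.

```shell
#!/bin/sh
# gen_secret [LEN]: print LEN (default 32) random alphanumeric characters.
gen_secret() {
    LC_ALL=C tr -dc 'A-Za-z0-9' < /dev/urandom | head -c "${1:-32}"
}

# write_pg_env FILE: create FILE, readable only by its owner, holding a
# freshly generated POSTGRES_PASSWORD (file name and layout are illustrative).
write_pg_env() {
    (
        umask 077
        printf 'POSTGRES_PASSWORD=%s\n' "$(gen_secret 32)" > "$1"
    )
}

# Example:
# write_pg_env /opt/hop/postgres.env
# docker run --name hop-metadata-db --env-file /opt/hop/postgres.env \
#   -v hop_db_data:/var/lib/postgresql/data -d postgres:17-alpine
```

The subshell keeps the `umask` change from leaking into the calling script.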

Phase 5: Apache Hop Configuration

Deploy the Apache Hop v3.2 image, mounting persistent volumes for configurations and projects.

    docker run --name apache-hop-server \
      -p 8080:8080 \
      -v /opt/hop/config:/usr/local/tomcat/webapps/hop/config \
      -v /opt/hop/projects:/projects \
      -d apache/hop:3.2
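
The container may need some time before it accepts requests, so later steps can wait on it with a small retry helper. This is a generic sketch, not part of Hop itself. The status URL and the `cluster:cluster` credentials in the commented example are assumptions about a default Hop Server setup and should be checked against your configuration.

```shell
#!/bin/sh
# retry ATTEMPTS DELAY CMD...: run CMD until it succeeds, at most ATTEMPTS
# times, sleeping DELAY seconds between tries. Returns CMD's last status.
retry() {
    attempts=$1; delay=$2; shift 2
    n=1
    while :; do
        "$@" && return 0
        status=$?
        [ "$n" -ge "$attempts" ] && return "$status"
        n=$((n + 1))
        sleep "$delay"
    done
}

# Example (URL and credentials are assumptions):
# retry 30 2 curl -fsS -o /dev/null -u cluster:cluster http://localhost:8080/hop/status/
```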

Phase 6: Pipeline Migration Strategy

Use conversion utilities to extract logic from the proprietary workflow formats and map it to Hop pipeline (.hpl) and workflow (.hwf) files. Manually validate complex macros and replace them with Hop’s native transform plugins.
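
Before converting anything, it helps to know which source tools appear most often, since those mappings deserve attention first. The sketch below assumes the source workflows are XML files in which each tool carries a `Plugin="..."` attribute. That is an assumption about the export format and should be verified against your own files. The function name `tool_inventory` is hypothetical.

```shell
#!/bin/sh
# tool_inventory FILE...: count how often each tool plugin appears in the
# given workflow files, most frequent first. Assumes each tool is recorded as
# a Plugin="..." attribute in the workflow XML (an assumption about the
# export format, not something the conversion utilities guarantee).
tool_inventory() {
    grep -oh 'Plugin="[^"]*"' "$@" |
        sed 's/^Plugin="//; s/"$//' |
        sort | uniq -c | sort -k1,1nr -k2
}

# Example:
# tool_inventory workflows/*.yxmd
```

The resulting counts give a rough ordering for building the tool-to-transform mapping table.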

Phase 7: Monitoring and Observability

Integrate Prometheus to track CPU utilization and pipeline execution times in real time.

    # Sample Prometheus scrape config for Hop Server
    scrape_configs:
      - job_name: 'hop-server'
        static_configs:
          - targets: ['localhost:8080']
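
Pipeline execution times can also be recorded by wrapping each run and emitting a sample in the Prometheus text exposition format, for example into a directory read by node_exporter's textfile collector. This is a sketch of that approach. The metric name `hop_pipeline_duration_seconds` and the wrapper name `time_pipeline` are hypothetical.

```shell
#!/bin/sh
# time_pipeline NAME CMD...: run CMD, then print one sample in the Prometheus
# text exposition format with the wall-clock duration in whole seconds.
# CMD's own stdout is sent to stderr so stdout carries only the metric line.
time_pipeline() {
    name=$1; shift
    start=$(date +%s)
    status=0
    "$@" >&2 || status=$?
    end=$(date +%s)
    printf 'hop_pipeline_duration_seconds{pipeline="%s",exit_code="%s"} %s\n' \
        "$name" "$status" "$((end - start))"
    return "$status"
}

# Example (the command being timed is a placeholder):
# time_pipeline nightly_load ./run_nightly_load.sh > /tmp/hop_nightly_load.prom
```

The wrapper returns the wrapped command's exit status, so it can replace the original call in a scheduler without hiding failures.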

Phase 8: Security Audit

Run automated vulnerability scanners against the infrastructure to identify potential misconfigurations.
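
One misconfiguration that is easy to check by hand is a service listening on every interface. The sketch below filters the output of `ss -H -tln` for wildcard listeners. It complements a real scanner and does not replace one. The function name `wildcard_listeners` is illustrative.

```shell
#!/bin/sh
# wildcard_listeners: reads `ss -H -tln` output on stdin and prints every
# local address that listens on all interfaces (0.0.0.0, [::] or *).
wildcard_listeners() {
    awk '$1 == "LISTEN" && $4 ~ /^(0\.0\.0\.0|\[::\]|\*):/ { print $4 }'
}

# Example:
# ss -H -tln | wildcard_listeners
```

Each address it prints is worth a second look, particularly ports published by containers.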

2026 Technical Compliance

Implementing a self-hosted data infrastructure in 2026 allows business owners to utilize general asset lifecycle strategies designed to incentivize domestic technological investment. For US-based entities, general technical compliance standards support the immediate expensing of qualifying hardware and software infrastructure.

Canadian enterprises can leverage relevant capital cost allowance frameworks for data processing machinery, depending on the primary use of the infrastructure.

Architect’s Note: If the infrastructure is used primarily for developing new software products or complex data models, research-based incentives may apply to engineering wages spent during the migration and optimization phases.

Furthermore, maintaining data on-premise or in a controlled private cloud fulfills the “Data Residency” requirements of 2026 privacy frameworks. By avoiding multi-tenant environments, you reduce the scope of compliance audits and harden the perimeter against unauthorized access.

Maintenance & Scaling

The longevity of the sovereign data integration framework depends on a disciplined maintenance schedule. On a quarterly basis, administrators must perform “Dry Run” restores of all metadata to verify the integrity of the 3-2-1 backup architecture. Security patches should be applied within 48 hours of release, utilizing a staging environment to prevent production regressions.
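
A restore test needs something to compare against. One simple option is a checksum manifest written at backup time and verified after each dry-run restore. The following is a minimal sketch assuming GNU `sha256sum` is available; the manifest file name and both function names are hypothetical.

```shell
#!/bin/sh
# make_manifest DIR: write DIR/MANIFEST.sha256 covering every regular file
# under DIR except the manifest itself.
make_manifest() {
    ( cd "$1" &&
      find . -type f ! -name MANIFEST.sha256 -exec sha256sum {} + |
          sort -k2 > MANIFEST.sha256 )
}

# verify_manifest DIR: succeeds only if every listed file still matches.
verify_manifest() {
    ( cd "$1" && sha256sum -c MANIFEST.sha256 >/dev/null 2>&1 )
}

# Example:
# make_manifest /opt/hop/projects          # at backup time
# verify_manifest /mnt/restore-test        # after a dry-run restore
```

Relative paths in the manifest let the same file verify a restore into a different directory.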

As data volumes grow, the horizontal scalability of Apache Hop allows for the addition of “Worker Nodes” without re-architecting the core framework. By adding AMD EPYC nodes to the cluster, the Hop Server can distribute transformation workloads across a larger pool of threads, maintaining low latency for real-time data needs.